Experimentation

Scaling impact through experimentation

How I built a multi-year learning system that improved decision-making, conversion, and product quality at Civitatis.

For years, Civitatis operated with a high-traffic digital product, multiple lines of business (B2C, B2B, mobile), and a very demanding roadmap. The challenge was not to "design screens"; it was to reduce uncertainty, make better decisions, and optimize a complex funnel without slowing down.

The real problem:

- Decisions depended too much on intuition, urgency, or political pressure

- Significant changes were made without validating their impact

- Learning was neither centralized nor cumulative

My goal was not to conduct experiments. It was to build organizational capacity: a system of experimentation.

As Principal Product Designer IC, I took full ownership of:

- Designing the end-to-end experimentation framework

- Integrating data (GA, ContentSquare) into product decisions

- Defining prioritization criteria and processes (RICE, ICE)

- Designing and prototyping experimental variations

- Configuring and monitoring experiments in ABTasty and Optimizely

- Aligning with Product Managers, Tech Leads, and stakeholders

- Documenting learnings and their strategic implications

- Maintaining governance and methodological rigor, and guarding against false positives
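
For readers unfamiliar with the prioritization criteria named in the list above, here is a minimal Python sketch of the standard RICE and ICE formulas. The function names, scales, and example numbers are illustrative assumptions, not the scoring sheet used at Civitatis.

```python
def rice_score(reach: float, impact: float, confidence: float, effort: float) -> float:
    """RICE = (Reach * Impact * Confidence) / Effort.

    Assumed scales: reach in users per period, impact as a multiplier,
    confidence between 0 and 1, effort in person-weeks.
    """
    if effort <= 0:
        raise ValueError("effort must be positive")
    return reach * impact * confidence / effort


def ice_score(impact: float, confidence: float, ease: float) -> float:
    """ICE = Impact * Confidence * Ease, each assumed to be scored from 1 to 10."""
    return impact * confidence * ease


# Hypothetical backlog items: (name, reach, impact, confidence, effort).
backlog = [
    ("idea A", 1000, 2.0, 0.5, 4.0),
    ("idea B", 3000, 1.0, 0.8, 2.0),
]

# Highest RICE score first.
ranked = sorted(backlog, key=lambda item: rice_score(*item[1:]), reverse=True)
```

RICE divides by effort, so it favors cheap ideas with broad reach. ICE is quicker to score but more subjective, because all three inputs are judgment calls.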

My approach was clear: create a stable, predictable, and scalable pipeline.

As Principal Product Designer IC, I owned the full experimentation lifecycle — from opportunity discovery and hypothesis framing to test design, configuration in ABTasty and Optimizely, statistical governance, and knowledge documentation. I worked in close partnership with PMs, Tech Leads, and stakeholders to build a stable, predictable, and scalable pipeline.

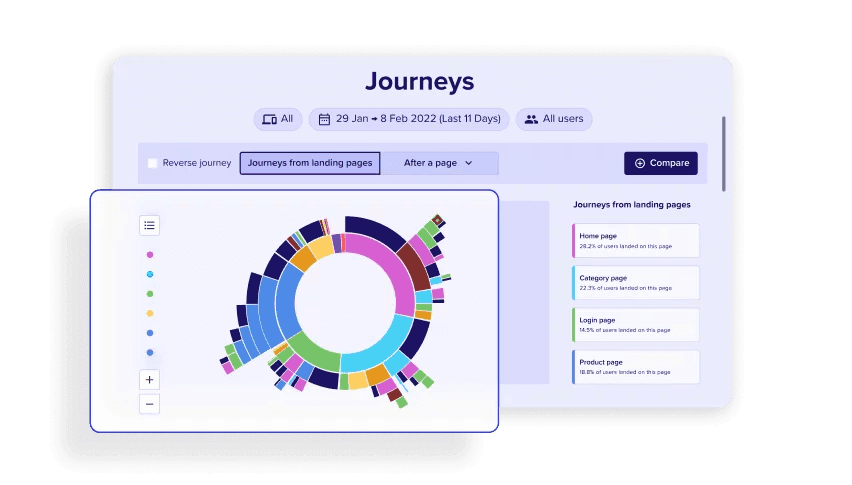

- Quantitative analysis: conversions, drop-offs, critical events. Identifying where users struggle through measurable data points.

- Qualitative analysis: session recordings, heatmaps, and behavioral patterns to understand the "why" behind the numbers.

- Friction point identification: reviewing recurring issues to build an evidence-based backlog of opportunities.

A well-founded hypothesis is essential. It is the basis of the experiment, and defining it forces us to make the strategic decisions that must be settled before the test launches.

Hypothesis definition

A clear, testable statement: "Because we know [evidence], we believe that [change] will cause [outcome], which we will measure by [metric]."
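
One way to keep every hypothesis in this shape is to store its four parts separately and render the sentence from them. The sketch below is a hypothetical helper (the `Hypothesis` class and its field names are assumptions for illustration), filled in with wording paraphrased from the cart-exit experiment described later.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Hypothesis:
    evidence: str  # what the data or research already shows
    change: str    # the single change being tested
    outcome: str   # the expected effect
    metric: str    # how the effect will be measured

    def statement(self) -> str:
        """Render the hypothesis using the template sentence."""
        return (
            f"Because we know {self.evidence}, we believe that {self.change} "
            f"will cause {self.outcome}, which we will measure by {self.metric}."
        )


example = Hypothesis(
    evidence="that users leave the cart page and do not return",
    change="removing the exit links",
    outcome="more users to continue to the next checkout step",
    metric="the conversion rate from the cart page to the next step",
)
print(example.statement())
```

Keeping the parts as separate fields makes it obvious when one of them is missing, for example a change proposed without supporting evidence.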

Metrics

Primary and secondary KPIs that will determine success, along with guardrail metrics to detect unintended negative effects.

Statistical criteria

Confidence level, minimum detectable effect (MDE), and statistical power required to draw reliable conclusions.

Traffic considerations

Sample size calculations, traffic allocation strategy, and segmentation to ensure the experiment reaches statistical significance.
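
As a rough illustration of how confidence level, MDE, and power combine into a sample size, the sketch below uses the common normal-approximation formula for comparing two conversion rates. It is an assumption for illustration, not the calculator built into ABTasty or Optimizely, and the function name and defaults are hypothetical.

```python
import math
from statistics import NormalDist


def sample_size_per_variant(
    baseline_rate: float,
    relative_mde: float,
    confidence: float = 0.95,
    power: float = 0.80,
) -> int:
    """Approximate visitors needed per variant for a two-sided test.

    baseline_rate: current conversion rate, e.g. 0.05 for 5%.
    relative_mde: smallest relative lift worth detecting, e.g. 0.10 for +10%.
    """
    if not 0 < baseline_rate < 1:
        raise ValueError("baseline_rate must be between 0 and 1")
    if relative_mde <= 0:
        raise ValueError("relative_mde must be positive")
    target_rate = baseline_rate * (1 + relative_mde)
    if target_rate >= 1:
        raise ValueError("baseline_rate * (1 + relative_mde) must stay below 1")

    alpha = 1 - confidence
    z_alpha = NormalDist().inv_cdf(1 - alpha / 2)  # two-sided critical value
    z_beta = NormalDist().inv_cdf(power)
    variance = baseline_rate * (1 - baseline_rate) + target_rate * (1 - target_rate)
    effect = target_rate - baseline_rate
    return math.ceil((z_alpha + z_beta) ** 2 * variance / effect ** 2)


# Hypothetical inputs: 5% baseline conversion, +10% relative MDE.
visitors_needed = sample_size_per_variant(0.05, 0.10)
```

Halving the MDE roughly quadruples the required sample, which is why low-traffic pages often cannot support tests of small changes.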

Test duration

Minimum runtime to account for weekly cycles, seasonality, and sufficient data collection — avoiding premature conclusions.
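
A minimal sketch of turning a required sample size into a runtime, rounded up to whole weeks so that every weekday is represented equally. The function name, its parameters, and the two-week floor are assumptions for illustration, not a rule stated in the framework.

```python
import math


def min_runtime_days(
    required_per_variant: int,
    variants: int,
    eligible_daily_visitors: int,
    min_weeks: int = 2,
) -> int:
    """Days to run, rounded up to full weeks and never below min_weeks."""
    if eligible_daily_visitors <= 0:
        raise ValueError("eligible_daily_visitors must be positive")
    total_needed = required_per_variant * variants
    days_for_traffic = math.ceil(total_needed / eligible_daily_visitors)
    weeks = max(min_weeks, math.ceil(days_for_traffic / 7))
    return weeks * 7


# Hypothetical inputs: 31,000 visitors per variant, control plus one variant,
# 3,000 eligible visitors per day.
runtime = min_runtime_days(31_000, 2, 3_000)
```

Seasonality is not modeled here. In practice it means avoiding or flagging atypical periods rather than adjusting the arithmetic.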

Experiment design

Control vs. variant structure, the specific change being tested, and how to isolate the variable to ensure clean results.

User experience risks

Potential negative impacts on usability, accessibility, or user trust — and mitigation strategies for each.

Dependencies

Technical, design, or cross-team dependencies that must be resolved before launch to avoid blockers or contaminated results.

Instrumentation & tracking

Events, tags, and analytics setup required to accurately measure the experiment outcomes and capture behavioral data.

Risks & assumptions

Explicit documentation of what we assume to be true and the risks if those assumptions prove wrong.

Stop/Go criteria

Pre-defined conditions under which we will stop the experiment early (e.g., significant negative impact) or proceed to full rollout.
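
Writing the stop/go conditions down as a small decision function before launch is one way to keep them from being renegotiated mid-test. The sketch below is hypothetical: the inputs, the ordering of the checks, and the choice to stop without rollout when the planned runtime ends with no significant win are all assumptions.

```python
from enum import Enum


class Decision(Enum):
    STOP = "stop"          # end the test without rolling out
    CONTINUE = "continue"  # keep collecting data
    GO = "go"              # proceed to full rollout


def stop_go(
    days_run: int,
    planned_days: int,
    guardrail_breached: bool,
    primary_lift: float,
    primary_significant: bool,
) -> Decision:
    """Apply pre-agreed stop/go rules to the current state of an experiment."""
    # A significant negative effect on a guardrail metric stops the test early.
    if guardrail_breached:
        return Decision.STOP
    # Never call a winner before the planned runtime has elapsed.
    if days_run < planned_days:
        return Decision.CONTINUE
    # Roll out only a significant, positive effect on the primary metric.
    if primary_significant and primary_lift > 0:
        return Decision.GO
    return Decision.STOP
```

For example, `stop_go(7, 14, False, 0.12, True)` returns `Decision.CONTINUE`: a promising result after one week is still not a reason to end a two-week test.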

Roles & Orchestration

In close partnership with the PM, I played a key role in driving cross-disciplinary alignment and ensuring coherence across the workstream:

Design

Hypothesis framing, UX flows, interface decisions, and system consistency.

Product

Opportunity sizing, KPI definition, and business risk assessment.

Engineering

Feasibility reviews, performance considerations, and technical implications.

Data

Segmentation strategy, significance analysis, and experiment validation.

Stakeholders

Driving alignment while avoiding bias-driven compromises.

Quality criteria

I applied methodological rigor to ensure each experiment was statistically sound, interpretable, and actionable.

Sufficient traffic

Sample size calculations to ensure the experiment reaches statistical significance and results are representative.

Minimum duration

Runtime accounting for weekly cycles and seasonality, avoiding premature conclusions from insufficient data.

Scoped changes

Isolating a single variable per test to ensure clean attribution of results to the specific change made.

Statistical validity

Confidence levels and minimum detectable effect thresholds to draw reliable, actionable conclusions.
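
To make the confidence check concrete, here is a minimal sketch of a pooled two-proportion z-test on conversion counts. It is a textbook frequentist test chosen for illustration. Testing platforms may use different statistical engines, and the counts below are hypothetical.

```python
import math
from statistics import NormalDist


def two_proportion_z_test(
    conversions_a: int, visitors_a: int, conversions_b: int, visitors_b: int
) -> tuple[float, float]:
    """Return (z, two-sided p-value) for variant B versus control A."""
    rate_a = conversions_a / visitors_a
    rate_b = conversions_b / visitors_b
    pooled = (conversions_a + conversions_b) / (visitors_a + visitors_b)
    std_err = math.sqrt(pooled * (1 - pooled) * (1 / visitors_a + 1 / visitors_b))
    if std_err == 0:
        raise ValueError("cannot test when the pooled rate is exactly 0 or 1")
    z = (rate_b - rate_a) / std_err
    p_value = 2 * (1 - NormalDist().cdf(abs(z)))
    return z, p_value


# Hypothetical counts: 500/10,000 conversions in control, 600/10,000 in the variant.
z, p_value = two_proportion_z_test(500, 10_000, 600, 10_000)
is_significant = p_value < 0.05  # 95% confidence level
```

The p-value is only meaningful if the sample size and runtime were fixed in advance. Checking it repeatedly and stopping at the first significant reading inflates the false-positive rate.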

Experimental integrity

Guardrails against data contamination, audience overlap, and external factors that could compromise results.

This framework represents the ideal process. In practice, business urgency, technical constraints, or limited traffic meant we had to adapt — but having the full system as a reference ensured we always knew what we were trading off.

I designed a 7-phase experimentation cycle — from opportunity discovery and hypothesis generation through implementation, analysis, and centralized knowledge management. Each experiment followed a structured template covering metrics, statistical criteria, traffic requirements, UX risks, and stop/go criteria. Quality guardrails (sufficient traffic, minimum duration, scoped changes, statistical validity) ensured every result was reliable and actionable.

Context

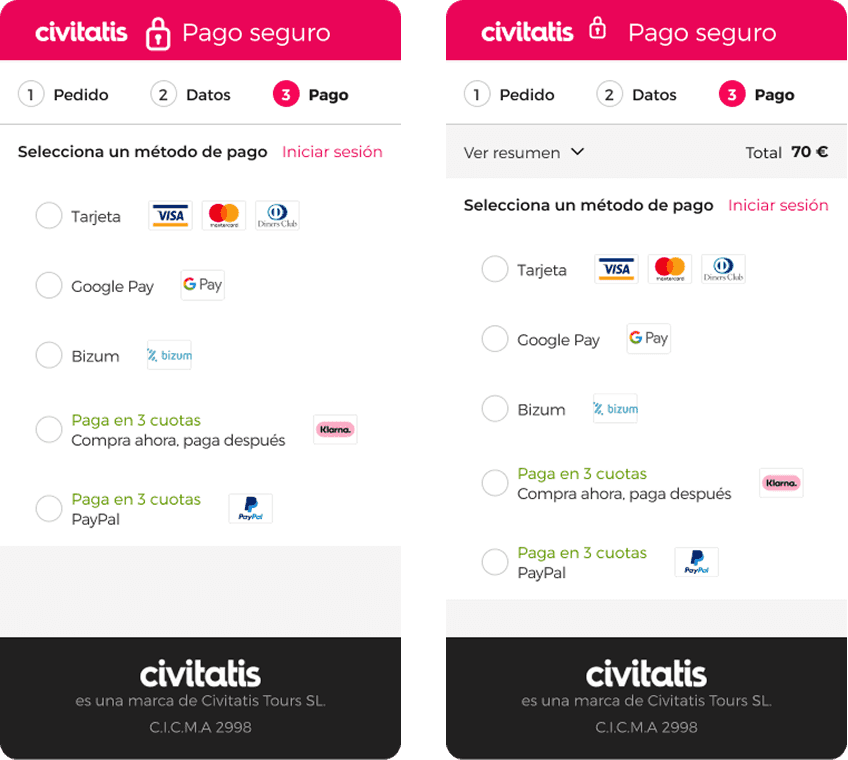

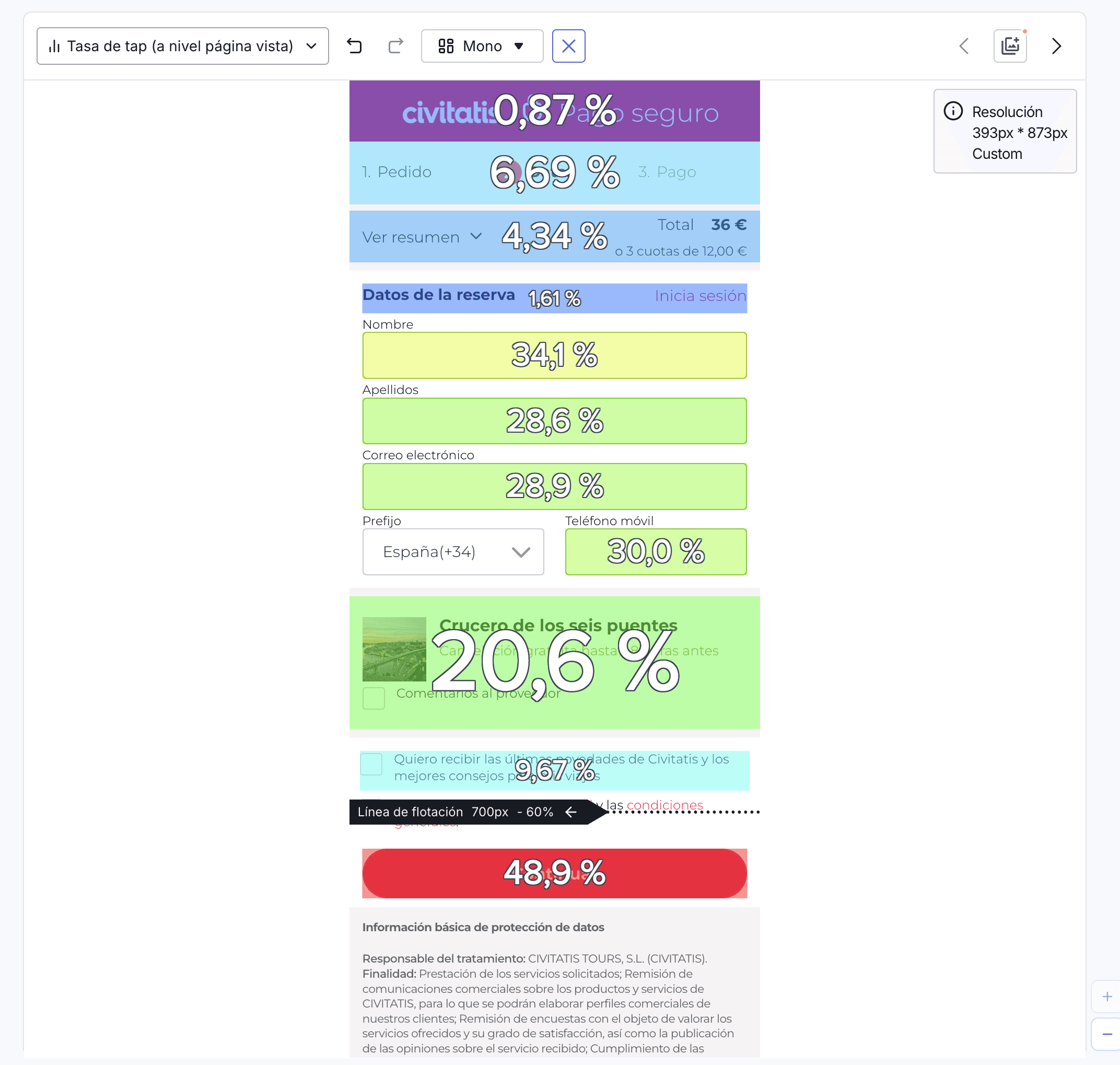

After analyzing user behavior through journeys, session recordings, and heatmaps, we identified a clear pattern: once users reached the payment page, many navigated back to review the cart details, creating uncertainty and increasing the likelihood of checkout abandonment.

Hypothesis

Because we know that conversion on mobile is much lower than on desktop, we believe that by showing the purchase summary on mobile checkout pages, we will reduce user uncertainty, lower the abandonment rate, and increase conversion.

"As a user, seeing what I'm going to pay will give me more confidence, reduce my uncertainty, and I'll complete the purchase securely."

Key learnings

- Uncertainty at checkout is a critical barrier on mobile

- Impactful improvements don't always require complex redesigns — reducing uncertainty can be enough

Strategic implications

- Strengthen a mobile-first approach across the conversion funnel

- Position transparency as a core pillar of the checkout experience

- Establish an ongoing strategy focused on friction removal

- Validate the opportunity to scale the solution

- Embed behavioral analytics as a standard input for CRO and product design

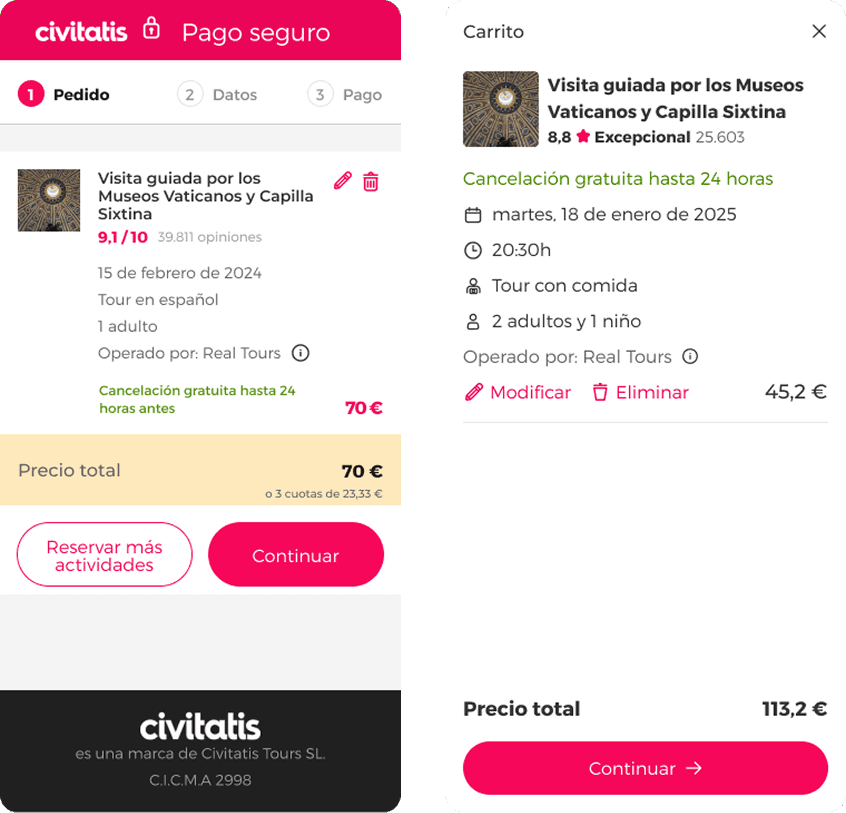

Context

In our efforts to improve the checkout process conversion rate, we noticed that users tended to continue browsing after reaching the shopping cart. 18% of sessions returned to the product detail page, 10% clicked on "Reserve more", and many others clicked on the logo. None of them returned to complete the purchase.

Hypothesis

Because we know that users navigate away from the cart page and do not return to complete their purchase, we believe that by removing the exit links, we will achieve a higher conversion rate from the cart page to the next step of the checkout process.

"As a user, without distractions, I will complete the process I started."

Key learnings

- Exit paths on the cart page create distraction and leakage

- Checkout commitment is fragile and easily interrupted

Strategic implications

- Strengthen a "focus-first" approach in high-intent stages

- Re-evaluate all exit points across the funnel

- Scale the principle: clarity over optionality

- Combine this insight with other checkout improvements

- Leverage this experiment as a foundation for iterative refinement

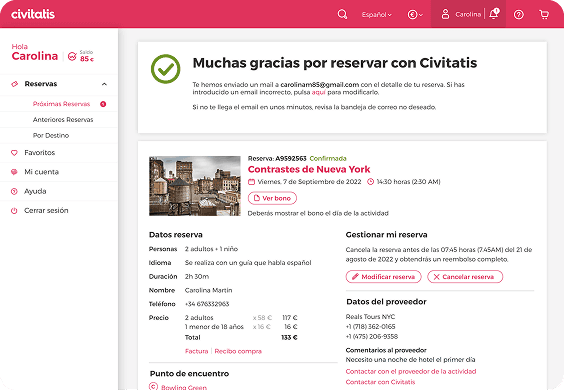

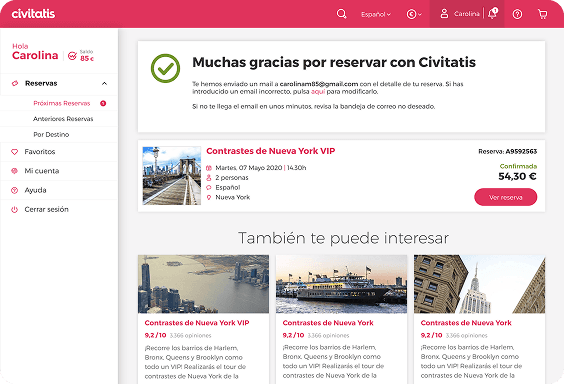

Context

One of the company's OKRs was to increase the number of activities per trip per user. We saw an opportunity to improve this metric on the thank-you page, which showed all the booking details first and pushed cross-selling to the bottom of the page.

Hypothesis

Because we know that cross-selling is not being displayed above the fold due to the amount of content, we believe that by reducing the content of the purchase confirmation, cross-selling will be visible at first glance and we will be able to increase the number of activities per trip per user.

"As a user, once I have completed my purchase and received the details by email, I will be able to see other options that may be of interest for my trip."

Because the conversion tag fires on the thank-you page itself, we were unable to measure purchase conversion for this test.

Key learnings

- Cross-selling visibility is a key driver of engagement

- Post-purchase is a high-value moment for complementary discovery

Strategic implications

- Reposition cross-selling as a core part of the post-purchase experience

- Adopt a "confirmation minimalism" principle

- Establish cross-selling visibility as a design standard

- Explore personalized or dynamic cross-selling models

Reduce cart exits

Removing exit links on the cart page to eliminate distraction at high-intent moments.

Increase products per trip

Moving cross-sell above the fold on the thank-you page by reducing confirmation content.

What I learned

Running experiments taught me more about managing risk than about designing interfaces.

The hardest part was never the variant design — it was figuring out the right question to ask.

We only started getting real value when we stopped celebrating wins and started documenting why things worked.

Having a framework is easy. Getting a team to actually challenge their own assumptions is another thing entirely.

A test result on its own means nothing. It only matters when someone uses it to make a better decision next time.

Without clear ownership and rules, experiments just become a way to justify decisions already made.

Get in touch

Let's talk.

Open to new opportunities. Based in Madrid, working globally.